The digitization of images and information from herbarium specimens is broadening their use and providing an important resource for phenotypic and phenological studies. As of spring 2020, one key repository, iDigBio, has over 19 million digitized specimens. High quality annotated images can be used to train machine learning algorithms to automate certain digital image-based tasks, saving time down the road. However, the initial annotation requires a high investment of person-hours, and is often done by volunteers. For this reason, it is important to find the easiest and most efficient ways of allowing the volunteers to complete their tasks.

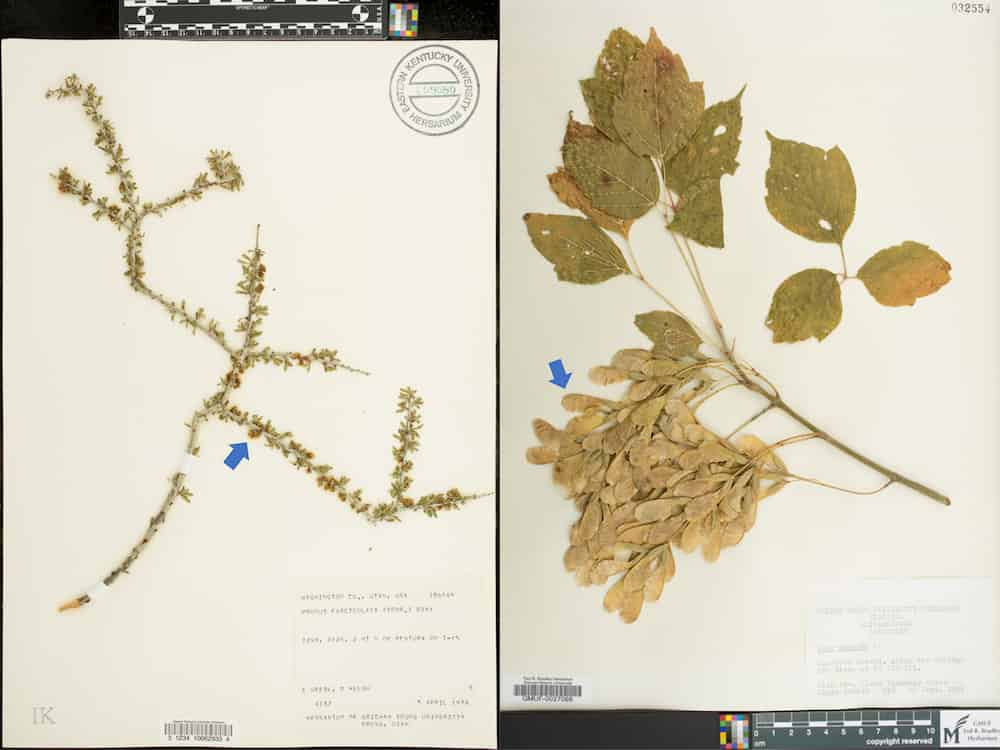

In a new article published in Applications in Plant Sciences’ Machine Learning in Plant Biology special issue, lead author Laura Brenskelle and colleagues used a high quality, pre-annotated data set containing 3000 species each of either Prunus or Acer specimens to test volunteers’ annotation accuracy of phenological traits under two different conditions.

In the first, scorers appeared in-person and were given a 15 minute training session as well as a handbook with illustrations and examples. In the second, scorers used the online platform Notes from Nature and were given the instructional handbook with no additional training. In the second setting, a specimen required three annotations and was allotted to the annotation agreed upon by two scorers. The authors then investigated the influence of such factors as the traits and taxa being scored, as well as the botanical expertise, academic career level, and speed of the individual scorers.

Surprisingly, the results showed that botanical expertise, career level, and speed were not important factors in the scorers’ accuracy. Instead, accuracy was governed by the traits and taxa being scored, whether the person scored in-person or online, and the individual themselves… some people were just more accurate regardless of other factors. Overall, those appearing in person were significantly more accurate, though both groups did reasonably well. “We think there are two main factors that may have contributed to the slight lag in online annotation accuracy – training and image quality,” explains Brenskelle, who emphasizes comprehensive training booklets and the ability to zoom in on the details of an image. She also notes that triplicate scoring improved online accuracy by three percent. “This is another way to improve online annotation accuracy, though it does require three times the amount of completed annotations.”

Though the traits being scored in this study were straightforward, Brenskelle is confident that volunteers can be effectively trained for more complex annotation tasks. “[T]he traits in this study were relatively simple presence/absence annotations. There are countless more complex annotation tasks I can imagine researchers would be interested in, especially for plant phenology. I do think, with proper volunteer training, our general approach would work for more complex traits,” she says. “I think the biggest challenge you would run into with more complex traits would be having images that show an appropriate level of detail for the things you’re asking volunteers to score. Aside from that challenge, I think if you developed training handbooks with visual examples, that would enable volunteers to score more complex traits.”